

[Kaggle DAY23]Real or Not? NLP with Disaster Tweets!

솜씨좋은장씨 2020. 3. 22. 06:10

Kaggle challenge, day 23!

Today I benchmarked the method used by team 스팸구이, which took 1st place in the recent KB financial text message analysis competition hosted by DACON.

Reference: hotorch/Dacon_14th_Competition_code (github.com), Dacon 14th Competition 1st Place - "Financial smishing character analysis"

Following that approach, I used TF-IDF with the term frequency replaced by 1 + log(TF),

and tuned a LightGBM model with GridSearchCV.

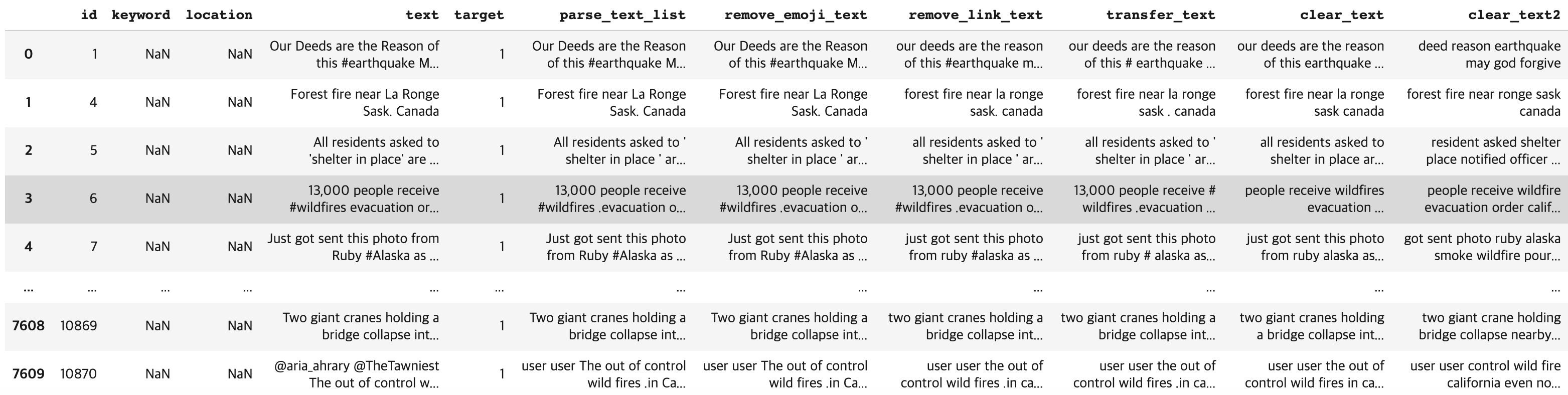

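The data preprocessing steps are as follows.

Rewrite forms such as he's -> he is / fromåÊwounds -> from wounds

=> remove emoji

=> remove links

=> expand abbreviations

=> unify the names of deities to god

=> remove \n and \t

=> remove special characters

=> remove digits

=> collapse any letter repeated three or more times into a single occurrence

=> tokenize with nltk's TreebankWordTokenizer

=> keep only tokens of length 3 or more

=> remove stopwords

=> lemmatize

=> join the tokens back into a sentence with ' '.join(word_list)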
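The cleaning code itself is not shown in this post, so here is a minimal sketch of a few of the steps above (contractions, links, \n and \t, repeated letters, digits and special characters) using only the standard `re` module. The `CONTRACTIONS` table and the `clean_tweet` name are hypothetical stand-ins, not the code I actually ran.

```python
import re

# Hypothetical, deliberately tiny contraction table; the real table is not shown in the post.
CONTRACTIONS = {"he's": "he is", "don't": "do not"}


def clean_tweet(text: str) -> str:
    # Expand the few contractions listed above.
    for short, full in CONTRACTIONS.items():
        text = text.replace(short, full)
    # Remove links.
    text = re.sub(r"https?://\S+", " ", text)
    # Replace newlines and tabs with spaces.
    text = re.sub(r"[\n\t]", " ", text)
    # Collapse a letter repeated three or more times into one occurrence.
    text = re.sub(r"([A-Za-z])\1{2,}", r"\1", text)
    # Drop digits and special characters, keeping only letters and spaces.
    text = re.sub(r"[^A-Za-z ]", " ", text)
    # Squeeze runs of spaces and trim the ends.
    return re.sub(r" +", " ", text).strip()


print(clean_tweet("he's sooooo scared!!! 911\thttp://t.co/abc"))  # he is so scared
```

The order matters a little: links are removed before special characters, otherwise the URL would be broken into stray word fragments first.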

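    import re

    from nltk.corpus import stopwords
    from nltk.stem import WordNetLemmatizer
    from nltk.tokenize import word_tokenize

    # Assumed setup (not shown in the post): English stopwords and a WordNet lemmatizer.
    stop_words = set(stopwords.words('english'))
    lemmatizer = WordNetLemmatizer()

    clear_text_list = list(train['clear_text'])

    X_train = []
    for clear_text in clear_text_list:
        word_list = word_tokenize(clear_text)
        # Keep only tokens of length 3 or more.
        word_list = [word for word in word_list if len(word) > 2]
        word_list = [word for word in word_list if word not in stop_words]
        # word_list = [stemmer.stem(word) for word in word_list]
        word_list = [lemmatizer.lemmatize(word) for word in word_list]
        X_train.append(' '.join(word_list))

    X_train[:7]

    train['clear_text2'] = X_train
    train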

TF-IDF

    import os

    import pandas as pd
    from sklearn.feature_extraction.text import TfidfVectorizer

    tweets = list(train['clear_text2'])
    real_or_not = list(train['target'])
    y = real_or_not  # assumption: later cells refer to the labels as y, which the post never defines

    vectorizer = TfidfVectorizer(
        min_df=2,
        analyzer="word",
        sublinear_tf=True,
        ngram_range=(1, 3),
        max_features=10000,
        lowercase=False,
        use_idf=True
    )

    X_data = vectorizer.fit_transform(tweets)

Setting sublinear_tf=True makes the vectorizer use 1 + log(TF) in place of the raw term frequency.
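To get a feel for what the 1 + log(TF) scaling does, here is a small sketch using only the standard library. The helper name `sublinear_tf` is made up for illustration, and the natural logarithm is used, which is what scikit-learn applies.

```python
import math


def sublinear_tf(raw_count: int) -> float:
    """Return 1 + ln(tf) for a positive count and 0 for an absent term."""
    return 1.0 + math.log(raw_count) if raw_count > 0 else 0.0


for count in (1, 2, 10, 100):
    print(count, round(sublinear_tf(count), 3))
# 1 1.0
# 2 1.693
# 10 3.303
# 100 5.605
```

A word that appears 100 times in a tweet ends up weighted only about 5.6 times as much as a word that appears once, so repeated words no longer dominate the vector.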

I set the maximum number of features to 10,000.

That 10,000-feature setting turned out to be the decisive reason 10 hours of effort ended in disappointment.

    tweets2 = list(test['clear_text2'])
    X_test = vectorizer.transform(tweets2)

    vectorizer.vocabulary_.items()

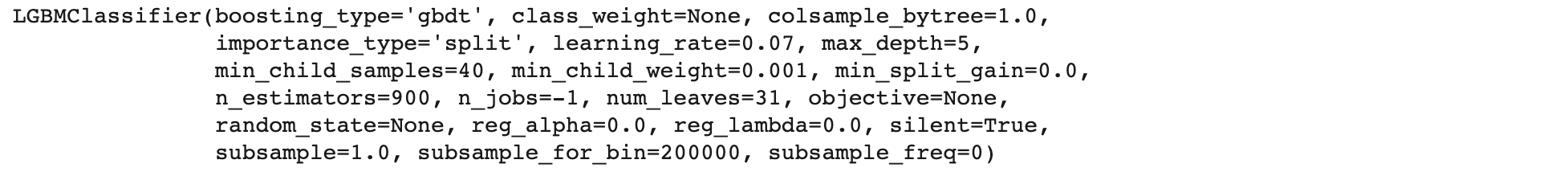

GridSearchCV + LightGBM

    import numpy as np
    from sklearn.model_selection import GridSearchCV

    def get_best_params(model, params):
        grid_model = GridSearchCV(model, param_grid=params, scoring='neg_mean_squared_error', cv=5, verbose=1)
        grid_model.fit(X_data, y)
        # best_score_ is the negated MSE, so flip the sign before taking the root.
        rmse = np.sqrt(-1 * grid_model.best_score_)
        print('Best mean RMSE:', np.round(rmse, 4))
        print('Best parameters:', grid_model.best_params_)
        return grid_model.best_estimator_

    lgb_param_grid = {
        'n_estimators': [100, 200, 300, 400, 500, 600, 700, 800, 900, 1000],
        'max_depth': [5, 10, 15, 20, 25, 30],
        'learning_rate': [0.001, 0.005, 0.007, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07, 0.08, 0.09, 0.1],
        'min_child_samples': [10, 20, 30, 40, 50, 60, 70]
    }

    from lightgbm import LGBMClassifier

    my_lgb_model = LGBMClassifier(random_state=42)

    %%time
    best_param = get_best_params(my_lgb_model, lgb_param_grid)

I used GridSearchCV to search for the best parameters.

After 10 hours 23 minutes, the search returned

- learning_rate : 0.04

- max_depth : 15

- min_child_samples : 40

- n_estimators : 400

as the best combination.
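The long running time makes more sense once the size of the grid is counted. The sketch below only does the arithmetic for the first grid from this post with cv=5; it does not run any training.

```python
from math import prod

# First parameter grid, copied from the post.
lgb_param_grid = {
    'n_estimators': [100, 200, 300, 400, 500, 600, 700, 800, 900, 1000],
    'max_depth': [5, 10, 15, 20, 25, 30],
    'learning_rate': [0.001, 0.005, 0.007, 0.01, 0.02, 0.03, 0.04,
                      0.05, 0.06, 0.07, 0.08, 0.09, 0.1],
    'min_child_samples': [10, 20, 30, 40, 50, 60, 70],
}
CV_FOLDS = 5

# An exhaustive grid search tries every combination of the listed values.
n_candidates = prod(len(values) for values in lgb_param_grid.values())
# Each candidate is trained once per cross-validation fold.
n_fits = n_candidates * CV_FOLDS

print(n_candidates, n_fits)  # 5460 27300
```

Spread over 10 hours 23 minutes, that is roughly 1.4 seconds per fit on average, so the total time comes almost entirely from the number of combinations rather than from any single slow model.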

First submission

    my_lgb_model = LGBMClassifier(learning_rate=0.04, max_depth=15, min_child_samples=40, n_estimators=400)
    my_lgb_model.fit(X_data, y)

    predict = my_lgb_model.predict(X_test)
    predict_labels = predict

    ids = list(test['id'])
    print(len(ids))

    submission_dic = {"id": ids, "target": predict_labels}
    submission_df = pd.DataFrame(submission_dic)
    submission_df.to_csv("kaggle_day23.csv", index=False)

When I tried to give X_test 10,000 features as well, it turned out to have only 7,097 features.

So I rebuilt the TF-IDF with max_features set to 7,097 and produced the result with that.

It was a pity: I had spent 10 hours finding the best parameters for 10,000 features and could not really put them to use.
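One possible reading of the 7,097 figure, and this is an assumption since the matrix shapes are not shown in the post, is that `max_features` is only an upper bound. If fewer terms survive the `min_df` filter, the fitted vocabulary is simply smaller than the cap, and `transform` always returns as many columns as the fitted vocabulary has. A toy sketch with a made-up corpus:

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# Made-up documents, only for illustration.
train_docs = ["fire in the forest", "forest fire spreads", "flood in the city"]
test_docs = ["city fire", "unseen words only"]

# min_df=2 keeps only terms that occur in at least two training documents;
# max_features=10000 is a cap, not a target size.
vectorizer = TfidfVectorizer(min_df=2, max_features=10000)
X_train = vectorizer.fit_transform(train_docs)
X_test = vectorizer.transform(test_docs)

print(len(vectorizer.vocabulary_))  # 4  (fire, forest, in, the)
print(X_train.shape, X_test.shape)  # (3, 4) (2, 4)
```

Checking `X_data.shape` right after `fit_transform` would show directly how many features the training matrix really has.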

Result

Still, unlike the 40-50 percent results from my earlier LightGBM submissions, this time I got a fairly high score.

Next, I searched for the best parameters again, this time on TF-IDF data built with max_features set to 7,097.

    tweets = list(train['clear_text2'])
    real_or_not = list(train['target'])

    vectorizer = TfidfVectorizer(
        min_df=2,
        analyzer="word",
        sublinear_tf=True,
        ngram_range=(1, 3),
        max_features=7097,
        lowercase=False,
        use_idf=True
    )

    X_data = vectorizer.fit_transform(tweets)

    lgb_param_grid = {
        'n_estimators': [400, 500, 600, 700, 800, 900, 1000],
        'max_depth': [5, 10, 15, 20, 25, 30],
        'learning_rate': [0.001, 0.005, 0.007, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07, 0.08, 0.09, 0.1],
        'min_child_samples': [10, 20, 30, 40, 50, 60, 70]
    }

    %%time
    my_lgb_model2 = LGBMClassifier(random_state=42)
    best_param = get_best_params(my_lgb_model2, lgb_param_grid)

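This search took close to 11 hours 30 minutes.

I barely slept, worrying the whole time that Colab would disconnect partway through.

The parameters from this search were

- learning_rate : 0.07

- max_depth : 5

- min_child_samples : 40

- n_estimators : 900

I produced a result based on these.

Second submission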

    my_lgb_model2 = LGBMClassifier(learning_rate=0.07, max_depth=5, min_child_samples=40, n_estimators=900)
    my_lgb_model2.fit(X_data, y)

    predict = my_lgb_model2.predict(X_test)
    predict_labels = predict

    ids = list(test['id'])
    print(len(ids))

    submission_dic = {"id": ids, "target": predict_labels}
    submission_df = pd.DataFrame(submission_dic)
    submission_df.to_csv("kaggle_day23_2.csv", index=False)

Result

The outcome of about 21 hours in total did not look very good.

I decided to drop the LightGBM model cleanly and, with only a few days left,

go back to the BERT model, which had given my best results so far.

On top of that, I added a step that relabels mislabeled data, which I came across while browsing Kaggle notebooks.

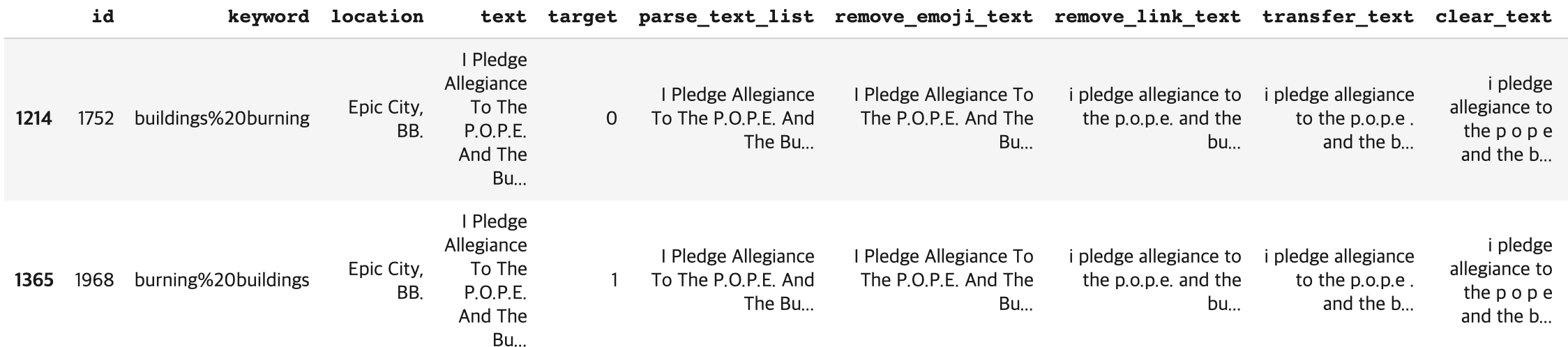

First, here is how the mislabeled data is found:

    df_mislabeled = train.groupby(['text']).nunique().sort_values(by='target', ascending=False)
    df_mislabeled = df_mislabeled[df_mislabeled['target'] > 1]['target']
    df_mislabeled.index.tolist()

18 tweets appear two or more times in the data, but some copies are labeled 1 and others 0, which seemed likely to confuse training.

    train[train['text'] == 'I Pledge Allegiance To The P.O.P.E. And The Burning Buildings of Epic City. ??????']

To take one example, the query above shows the same tweet text labeled both 0 and 1.
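The idea behind the groupby is easier to see on a toy table. The sketch below uses made-up tweets, not the competition data: a text is flagged when its copies carry more than one distinct label.

```python
import pandas as pd

# Made-up rows for illustration only.
toy = pd.DataFrame({
    "text": ["fire downtown", "fire downtown", "nice weather", "nice weather", "flood warning"],
    "target": [1, 0, 0, 0, 1],
})

# Count the distinct labels per tweet text.
label_counts = toy.groupby("text")["target"].nunique()
# Keep only the texts whose duplicates disagree.
conflicting = label_counts[label_counts > 1].index.tolist()

print(conflicting)  # ['fire downtown']
```

"nice weather" is duplicated too, but both copies agree on the label, so it is not flagged.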

train['target_relabeled'] = train['target'].copy()

train.loc[train['text'] == 'like for the music video I want some real action shit like burning buildings and police chases not some weak ben winston shit', 'target_relabeled'] = 0

train.loc[train['text'] == 'Hellfire is surrounded by desires so be careful and donÛªt let your desires control you! #Afterlife', 'target_relabeled'] = 0

train.loc[train['text'] == 'To fight bioterrorism sir.', 'target_relabeled'] = 0

train.loc[train['text'] == '.POTUS #StrategicPatience is a strategy for #Genocide; refugees; IDP Internally displaced people; horror; etc. https://t.co/rqWuoy1fm4', 'target_relabeled'] = 1

train.loc[train['text'] == 'CLEARED:incident with injury:I-495 inner loop Exit 31 - MD 97/Georgia Ave Silver Spring', 'target_relabeled'] = 1

train.loc[train['text'] == '#foodscare #offers2go #NestleIndia slips into loss after #Magginoodle #ban unsafe and hazardous for #humanconsumption', 'target_relabeled'] = 0

train.loc[train['text'] == 'In #islam saving a person is equal in reward to saving all humans! Islam is the opposite of terrorism!', 'target_relabeled'] = 0

train.loc[train['text'] == 'Who is bringing the tornadoes and floods. Who is bringing the climate change. God is after America He is plaguing her\n \n#FARRAKHAN #QUOTE', 'target_relabeled'] = 1

train.loc[train['text'] == 'RT NotExplained: The only known image of infamous hijacker D.B. Cooper. http://t.co/JlzK2HdeTG', 'target_relabeled'] = 1

train.loc[train['text'] == "Mmmmmm I'm burning.... I'm burning buildings I'm building.... Oooooohhhh oooh ooh...", 'target_relabeled'] = 0

train.loc[train['text'] == "wowo--=== 12000 Nigerian refugees repatriated from Cameroon", 'target_relabeled'] = 0

train.loc[train['text'] == "He came to a land which was engulfed in tribal war and turned it into a land of peace i.e. Madinah. #ProphetMuhammad #islam", 'target_relabeled'] = 0

train.loc[train['text'] == "Hellfire! We donÛªt even want to think about it or mention it so letÛªs not do anything that leads to it #islam!", 'target_relabeled'] = 0

train.loc[train['text'] == "The Prophet (peace be upon him) said 'Save yourself from Hellfire even if it is by giving half a date in charity.'", 'target_relabeled'] = 0

train.loc[train['text'] == "Caution: breathing may be hazardous to your health.", 'target_relabeled'] = 1

train.loc[train['text'] == "I Pledge Allegiance To The P.O.P.E. And The Burning Buildings of Epic City. ??????", 'target_relabeled'] = 0

train.loc[train['text'] == "#Allah describes piling up #wealth thinking it would last #forever as the description of the people of #Hellfire in Surah Humaza. #Reflect", 'target_relabeled'] = 0

train.loc[train['text'] == "that horrible sinking feeling when youÛªve been at home on your phone for a while and you realise its been on 3G this whole time", 'target_relabeled'] = 0

NLP with Disaster Tweets - EDA, Cleaning and BERT (www.kaggle.com)

I relabeled the data with reference to the notebook linked above.
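The long run of `train.loc[...]` assignments can also be written as a single lookup table. This is only an alternative sketch on a toy frame, not what the referenced notebook does. The `corrections` dict is a hypothetical name, and its one entry is taken from the relabeling list above.

```python
import pandas as pd

# Toy frame; the third row is a made-up tweet.
toy = pd.DataFrame({
    "text": ["To fight bioterrorism sir.", "To fight bioterrorism sir.", "some other tweet"],
    "target": [1, 0, 1],
})

# Corrected label keyed by the exact tweet text.
corrections = {"To fight bioterrorism sir.": 0}

# Texts found in the dict get the corrected label; all others keep the original one.
toy["target_relabeled"] = toy["text"].map(corrections).fillna(toy["target"]).astype(int)

print(toy["target_relabeled"].tolist())  # [0, 0, 1]
```

Keeping the corrections in one dict makes it easier to review the 18 entries side by side.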

I then trained a BERT model on this data and generated predictions.

    path = '/content/mnt/My Drive/Colab Notebooks/Kaggle/bert'

    import tensorflow as tf
    import pandas as pd
    import numpy as np
    import re
    import pickle
    import keras
    from keras.models import load_model
    from keras import backend as K
    from keras import Input, Model
    from keras import optimizers
    import codecs
    from tqdm import tqdm
    import shutil
    import os

    import warnings
    warnings.filterwarnings(action='ignore')
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '3'
    tf.logging.set_verbosity(tf.logging.ERROR)  # TensorFlow 1.x logging API

    !pip install keras-bert
    !pip install keras-radam

    from keras_bert import load_trained_model_from_checkpoint, load_vocabulary
    from keras_bert import Tokenizer
    from keras_bert import AdamWarmup, calc_train_steps
    from keras_radam import RAdam

    pretrained_path = path
    config_path = os.path.join(pretrained_path, 'bert_config.json')
    checkpoint_path = os.path.join(pretrained_path, 'bert_model.ckpt')
    vocab_path = os.path.join(pretrained_path, 'vocab.txt')

    DATA_COLUMN = "clear_text2"
    LABEL_COLUMN = "target_relabeled"

    token_dict = {}

    with codecs.open(vocab_path, 'r', 'utf8') as reader:
        for line in reader:
            token = line.strip()
            if "_" in token:
                token = token.replace("_", "")
                token = "##" + token
            token_dict[token] = len(token_dict)

    class inherit_Tokenizer(Tokenizer):
        def _tokenize(self, text):
            if not self._cased:
                text = text.lower()
            spaced = ''
            for ch in text:
                if self._is_punctuation(ch) or self._is_cjk_character(ch):
                    spaced += ' ' + ch + ' '
                elif self._is_space(ch):
                    spaced += ' '
                elif ord(ch) == 0 or ord(ch) == 0xfffd or self._is_control(ch):
                    continue
                else:
                    spaced += ch
            tokens = []
            for word in spaced.strip().split():
                tokens += self._word_piece_tokenize(word)
            return tokens

    # Assumed: the tokenizer instantiation is not shown in the post.
    tokenizer = inherit_Tokenizer(token_dict)
    # Note: SEQ_LEN (the maximum sequence length) is used below, but its value is not shown in the post.

    def convert_data(data_df):
        global tokenizer
        indices, targets = [], []
        for i in tqdm(range(len(data_df))):
            ids, segments = tokenizer.encode(data_df[DATA_COLUMN][i], max_len=SEQ_LEN)
            indices.append(ids)
            targets.append(data_df[LABEL_COLUMN][i])
        items = list(zip(indices, targets))
        indices, targets = zip(*items)
        indices = np.array(indices)
        # The segment input is all zeros because each example is a single sentence.
        return [indices, np.zeros_like(indices)], np.array(targets)

    def load_data(pandas_dataframe):
        data_df = pandas_dataframe
        data_df[DATA_COLUMN] = data_df[DATA_COLUMN].astype(str)
        data_x, data_y = convert_data(data_df)
        return data_x, data_y

    train_x, train_y = load_data(train)

    layer_num = 12

    model = load_trained_model_from_checkpoint(
        config_path,
        checkpoint_path,
        training=True,
        trainable=True,
        seq_len=SEQ_LEN,
    )

    def get_bert_finetuning_model(model):
        inputs = model.inputs[:2]
        dense = model.layers[-3].output
        outputs = keras.layers.Dense(
            2,
            activation='sigmoid',
            kernel_initializer=keras.initializers.TruncatedNormal(stddev=0.02),
            name='real_output',
        )(dense)
        bert_model = keras.models.Model(inputs, outputs)
        bert_model.compile(
            optimizer=RAdam(learning_rate=6e-6, weight_decay=0.0025),
            loss='binary_crossentropy',
            metrics=['accuracy'])
        return bert_model

    # Convert the 0/1 labels into two-column one-hot targets.
    train_y_new = []
    for i in range(len(train_y)):
        if train_y[i] == 1:
            train_y_new.append([0, 1])
        else:
            train_y_new.append([1, 0])
    train_y_new = np.array(train_y_new)

    def predict_convert_data(data_df):
        global tokenizer
        indices = []
        for i in tqdm(range(len(data_df))):
            ids, segments = tokenizer.encode(data_df[DATA_COLUMN][i], max_len=SEQ_LEN)
            indices.append(ids)
        items = indices
        indices = np.array(indices)
        return [indices, np.zeros_like(indices)]

    def predict_load_data(x):  # takes a pandas DataFrame as input
        data_df = x
        data_df[DATA_COLUMN] = data_df[DATA_COLUMN].astype(str)
        data_x = predict_convert_data(data_df)
        return data_x

    test_set = predict_load_data(test)
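The label conversion above maps 1 to [0, 1] and 0 to [1, 0], which is plain one-hot encoding. As a small aside, the same array can be built by indexing an identity matrix with NumPy. The labels below are made up for illustration.

```python
import numpy as np

train_y = np.array([1, 0, 0, 1])  # hypothetical labels

# Row k of the 2x2 identity matrix is the one-hot vector for class k.
train_y_new = np.eye(2, dtype=int)[train_y]
print(train_y_new.tolist())  # [[0, 1], [1, 0], [1, 0], [0, 1]]

# argmax along axis 1 recovers the original class, as is done on the predictions later.
print(np.argmax(train_y_new, axis=1).tolist())  # [1, 0, 0, 1]
```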

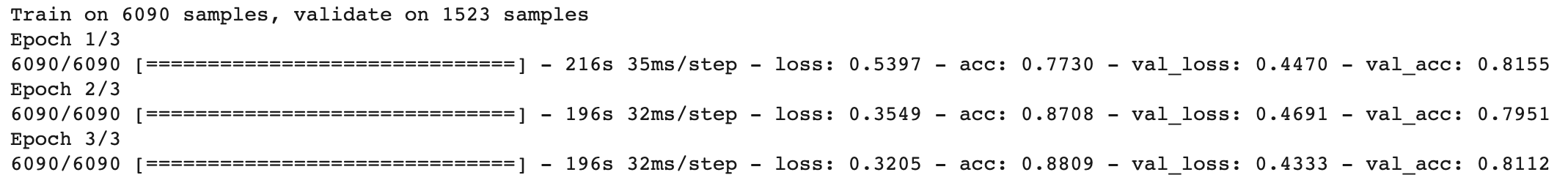

Third submission

    sess = K.get_session()
    uninitialized_variables = set([i.decode('ascii') for i in sess.run(tf.report_uninitialized_variables())])
    init = tf.variables_initializer([v for v in tf.global_variables() if v.name.split(':')[0] in uninitialized_variables])
    sess.run(init)

    bert_model = get_bert_finetuning_model(model)
    history = bert_model.fit(train_x, train_y_new, epochs=3, batch_size=16, verbose=1, validation_split=0.2, shuffle=True)

    preds = bert_model.predict(test_set)
    # Pick the class with the higher output as the predicted label.
    predict_labels = np.argmax(preds, axis=1)

    ids = list(test['id'])
    submission_dic = {"id": ids, "target": predict_labels}
    submission_df = pd.DataFrame(submission_dic)
    submission_df.to_csv("kaggle_day23_3.csv", index=False)

Result

It felt good to get a score above 0.81 for the first time in a while.

Fourth submission

    sess = K.get_session()
    uninitialized_variables = set([i.decode('ascii') for i in sess.run(tf.report_uninitialized_variables())])
    init = tf.variables_initializer([v for v in tf.global_variables() if v.name.split(':')[0] in uninitialized_variables])
    sess.run(init)

    # Note: this reuses the same `model` object as the third submission, so the BERT layers
    # presumably start from the weights already fine-tuned there, not from the checkpoint.
    bert_model2 = get_bert_finetuning_model(model)
    history = bert_model2.fit(train_x, train_y_new, epochs=3, batch_size=32, verbose=1, validation_split=0.2, shuffle=True)

    preds = bert_model2.predict(test_set)
    predict_labels = np.argmax(preds, axis=1)

    ids = list(test['id'])
    submission_dic = {"id": ids, "target": predict_labels}
    submission_df = pd.DataFrame(submission_dic)
    submission_df.to_csv("kaggle_day23_4.csv", index=False)

Result

For the remaining 2 days I plan to do my best to optimize the BERT model.

Thank you for reading.
