
[Kaggle DAY22]Real or Not? NLP with Disaster Tweets!

솜씨좋은장씨 2020. 3. 21. 07:32


Kaggle challenge, day 22!

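Today I got back from my part-time job with little time to spare, so instead of building new models I took the best-performing models from my earlier submissions, retrained them on data processed with the new preprocessing method, and generated predictions.

The data preprocessing is the same as on day 21.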

```python
from keras.preprocessing.text import Tokenizer

# X_train / X_test: the preprocessed tweet texts from the day 21 pipeline
max_words = 12396

tokenizer = Tokenizer(num_words=max_words)
tokenizer.fit_on_texts(X_train)

# Convert each text into a list of word indices
X_train_vec = tokenizer.texts_to_sequences(X_train)
X_test_vec = tokenizer.texts_to_sequences(X_test)
```

```python
import matplotlib.pyplot as plt

# Look at the sequence lengths to choose a padding length
print("Max text length:", max(len(l) for l in X_train_vec))
print("Average text length:", sum(map(len, X_train_vec)) / len(X_train_vec))

plt.hist([len(s) for s in X_train_vec], bins=50)
plt.xlabel('length of Data')
plt.ylabel('number of Data')
plt.show()
```
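To make the tokenization and the length check above more concrete, here is a simplified pure-Python sketch of what Keras' `Tokenizer` does with its default settings: lowercase, strip punctuation, rank words by frequency starting at index 1, and drop any word whose index is not below `num_words`. It also covers the length statistics and the front padding/truncation that `pad_sequences` applies later with `max_len = 21`. The helper names (`simple_tokenize`, `build_word_index`, `pad_pre`, ...) and the three toy tweets are hypothetical and only for illustration; this is not Keras' actual implementation.

```python
from collections import Counter

# Hypothetical helpers for illustration; not part of Keras or the original notebook.
# Characters removed by Keras' Tokenizer by default (its `filters` argument)
FILTERS = '!"#$%&()*+,-./:;<=>?@[\\]^_`{|}~\t\n'
TABLE = str.maketrans({c: " " for c in FILTERS})


def simple_tokenize(text):
    # Lowercase, turn filtered characters into spaces, split on whitespace
    return text.lower().translate(TABLE).split()


def build_word_index(texts):
    # Most frequent word gets index 1; ties keep first-seen order; 0 stays free for padding
    counts = Counter()
    for text in texts:
        counts.update(simple_tokenize(text))
    return {word: i + 1 for i, (word, _) in enumerate(counts.most_common())}


def texts_to_sequences(texts, word_index, num_words):
    # Keep only known words whose index is below num_words
    return [
        [word_index[w] for w in simple_tokenize(text)
         if w in word_index and word_index[w] < num_words]
        for text in texts
    ]


def length_stats(seqs):
    # Maximum and average sequence length, like the two print lines above
    lengths = [len(s) for s in seqs]
    return max(lengths), sum(lengths) / len(lengths)


def pad_pre(seqs, maxlen, value=0):
    # pad_sequences defaults: pad at the front and truncate long sequences at the front
    padded = []
    for s in seqs:
        s = list(s)[-maxlen:]          # keep the last maxlen tokens (assumes maxlen > 0)
        padded.append([value] * (maxlen - len(s)) + s)
    return padded


texts = ["Forest fire near La Ronge", "fire! fire everywhere", "just a quiet day"]  # toy tweets
word_index = build_word_index(texts)
seqs = texts_to_sequences(texts, word_index, num_words=6)
print(seqs)                  # [[2, 1, 3, 4, 5], [1, 1], []]
print(length_stats(seqs))
print(pad_pre(seqs, maxlen=4))
```

Because padding and truncation both happen at the front by default, a tweet longer than `max_len` keeps its last tokens, and shorter tweets are left-padded with 0, an index the tokenizer never assigns to a word.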

```python
import numpy as np

# One-hot encode the labels: 1 (disaster) -> [0, 1], 0 (not a disaster) -> [1, 0]
y_train = []
for i in range(len(train['target'])):
    if train['target'].iloc[i] == 1:
        y_train.append([0, 1])
    elif train['target'].iloc[i] == 0:
        y_train.append([1, 0])

y_train = np.array(y_train)
```

```python
from keras.layers import Embedding, Dense, LSTM, GRU, Dropout, Flatten, Conv1D, GlobalMaxPooling1D
```
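The label loop above builds the one-hot rows by hand. A vectorized equivalent indexes the rows of a 2x2 identity matrix with the 0/1 targets; `keras.utils.to_categorical(train['target'], num_classes=2)` produces the same matrix as floats. The sketch below compares the two on toy labels; the function names are hypothetical.

```python
import numpy as np

# Hypothetical helpers for illustration; not part of the original notebook.


def one_hot_loop(targets):
    # Same mapping as the post's loop: 1 -> [0, 1], 0 -> [1, 0]
    rows = []
    for t in targets:
        if t == 1:
            rows.append([0, 1])
        elif t == 0:
            rows.append([1, 0])
    return np.array(rows)


def one_hot_eye(targets, num_classes=2):
    # Row k of the identity matrix is the one-hot vector for class k
    return np.eye(num_classes, dtype=int)[np.asarray(targets)]


targets = [1, 0, 0, 1]          # toy labels standing in for train['target']
print(one_hot_eye(targets))
```

One behavioral difference: the loop silently skips any target other than 0 or 1, so its output could end up with fewer rows than `train`, while the indexing version never skips a row.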

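```python
from keras.models import Sequential
from keras.preprocessing.sequence import pad_sequences

# Pad/truncate every sequence to 21 tokens (by default zeros are added at the front)
max_len = 21

X_train_vec = pad_sequences(X_train_vec, maxlen=max_len)
X_test_vec = pad_sequences(X_test_vec, maxlen=max_len)
```

First submission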

```python
from keras import optimizers

# Adam with a high initial learning rate and time-based decay (Keras 2.x arguments)
adam2 = optimizers.Adam(lr=0.05, decay=0.1)

# Embedding -> GRU -> Dropout -> 2-unit output
model_3 = Sequential()
model_3.add(Embedding(max_words, 100))
model_3.add(GRU(32))
model_3.add(Dropout(0.5))
model_3.add(Dense(2, activation='sigmoid'))

model_3.compile(loss='binary_crossentropy', optimizer=adam2, metrics=['acc'])
```
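For a sense of scale, the parameter count of this GRU model can be worked out by hand from the layer sizes in the post (vocabulary 12396, 100-dimensional embedding, 32 GRU units, 2 outputs). The GRU count depends on its `reset_after` setting: as far as I know, standalone Keras 2.x defaults to `reset_after=False`, while `tf.keras` in TensorFlow 2 defaults to `True`, which adds a second bias vector per gate. The helper names below are hypothetical; Dropout has no weights, so it does not appear.

```python
# Hypothetical helpers for illustration; not part of Keras or the original notebook.


def embedding_params(vocab_size, dim):
    # One dim-sized vector per vocabulary index
    return vocab_size * dim


def gru_params(input_dim, units, reset_after):
    # Three gates (update, reset, candidate), each with an input kernel,
    # a recurrent kernel and a bias; reset_after=True keeps two biases per gate
    biases = 2 * units if reset_after else units
    return 3 * (input_dim * units + units * units + biases)


def dense_params(input_dim, units):
    # Weight matrix plus one bias per output unit
    return input_dim * units + units


emb = embedding_params(12396, 100)
for reset_after in (False, True):
    total = emb + gru_params(100, 32, reset_after) + dense_params(32, 2)
    print(f"reset_after={reset_after}: total parameters = {total:,}")
```

Either way, about 99% of the weights (1,239,600 of roughly 1.25 million) sit in the embedding table, not in the GRU.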

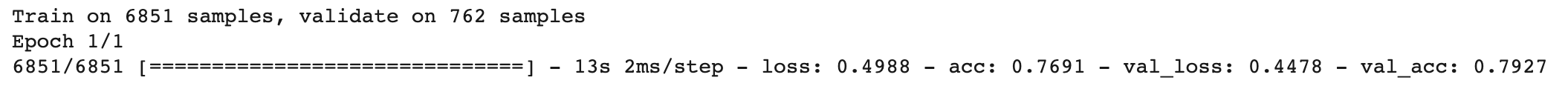

```python
import pandas as pd

history = model_3.fit(X_train_vec, y_train, batch_size=16, epochs=1, validation_split=0.1)

predict = model_3.predict(X_test_vec)
# Column 1 is the "disaster" score, so argmax over the two columns gives the 0/1 label
predict_labels = np.argmax(predict, axis=1)

# test: the test DataFrame loaded in the earlier preprocessing step
ids = list(test['id'])
submission_dic = {"id": ids, "target": predict_labels}
submission_df = pd.DataFrame(submission_dic)
submission_df.to_csv("kaggle_day22.csv", index=False)
```

Result
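Each submission turns the model's two scores per tweet into a 0/1 label with `argmax` and writes an `id,target` CSV. The loop in the post that assigns `predict_labels[i]` to itself leaves the array unchanged, so it is not needed. The sketch below shows the same two steps with the standard `csv` module instead of pandas; the function names, toy scores, and toy ids are hypothetical.

```python
import csv
import os
import tempfile

import numpy as np

# Hypothetical helpers for illustration; not part of the original notebook.


def probs_to_labels(probs):
    # Row = one tweet, column 0 = "not a disaster", column 1 = "disaster"
    return np.argmax(np.asarray(probs), axis=1)


def write_submission(ids, labels, path):
    # Same two-column layout as the post's DataFrame: id,target
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["id", "target"])
        for tweet_id, label in zip(ids, labels):
            writer.writerow([tweet_id, int(label)])


probs = [[0.8, 0.2], [0.3, 0.7], [0.45, 0.55]]   # toy model outputs
labels = probs_to_labels(probs)                   # labels is [0, 1, 1]
out_path = os.path.join(tempfile.mkdtemp(), "submission_example.csv")
write_submission([0, 2, 3], labels, out_path)
```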

Second submission

```python
# Embedding -> Flatten -> Dense; Flatten needs a fixed input_length (21 = max_len)
model2 = Sequential()
model2.add(Embedding(max_words, 100, input_length=21))
model2.add(Flatten())
model2.add(Dense(128, activation='relu'))
model2.add(Dense(2, activation='sigmoid'))
model2.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])

history = model2.fit(X_train_vec, y_train, epochs=1, batch_size=32, validation_split=0.1)

predict = model2.predict(X_test_vec)
predict_labels = np.argmax(predict, axis=1)

ids = list(test['id'])
submission_dic = {"id": ids, "target": predict_labels}
submission_df = pd.DataFrame(submission_dic)
submission_df.to_csv("kaggle_day22_2.csv", index=False)
```

Result
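The second model replaces the GRU with `Flatten`, which is why its Embedding layer needs `input_length=21`: Flatten turns the (21, 100) embedding output into one 2100-value vector, so the weight shape of the following Dense layer depends on the sequence length. The arithmetic below, written as a hypothetical helper using the post's layer sizes, shows where the parameters go.

```python
# Hypothetical helper for illustration; not part of Keras or the original notebook.


def flatten_model_params(vocab_size, emb_dim, seq_len, hidden, outputs=2):
    embedding = vocab_size * emb_dim
    flatten_width = seq_len * emb_dim          # Flatten only reshapes (seq_len, emb_dim) into one vector
    dense_hidden = flatten_width * hidden + hidden
    dense_out = hidden * outputs + outputs
    return {
        "embedding": embedding,
        "flatten_width": flatten_width,
        "dense_hidden": dense_hidden,
        "dense_out": dense_out,
        "total": embedding + dense_hidden + dense_out,
    }


for name, value in flatten_model_params(12396, 100, 21, 128).items():
    print(f"{name}: {value:,}")
```

Compared with the GRU model, the extra weights come almost entirely from the 2100 x 128 matrix of the first Dense layer.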

Third submission

```python
# Embedding -> Conv1D -> global max pooling -> Dense
model2 = Sequential()
model2.add(Embedding(max_words, 128, input_length=21))
model2.add(Dropout(0.2))
model2.add(Conv1D(256, 3, padding='valid', activation='relu', strides=1))
model2.add(GlobalMaxPooling1D())
model2.add(Dense(32, activation='relu'))
model2.add(Dropout(0.2))
model2.add(Dense(2, activation='sigmoid'))
model2.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])

history2 = model2.fit(X_train_vec, y_train, epochs=1, batch_size=16, validation_split=0.1)

predict = model2.predict(X_test_vec)
predict_labels = np.argmax(predict, axis=1)

ids = list(test['id'])
submission_dic = {"id": ids, "target": predict_labels}
submission_df = pd.DataFrame(submission_dic)
submission_df.to_csv("kaggle_day22_3.csv", index=False)
```

Result
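The third model uses a 1D convolution. With `padding='valid'` and stride 1, a width-3 filter fits into a 21-token sequence at 21 - 3 + 1 = 19 positions, and `GlobalMaxPooling1D` then keeps only the largest response of each of the 256 filters. Below is a small NumPy sketch of that forward pass with random weights, just to check the shapes and the arithmetic; the function names are hypothetical and the Dropout layers are left out.

```python
import numpy as np

# Hypothetical helpers for illustration; not Keras' implementation.


def conv1d_valid_relu(x, kernel, bias, stride=1):
    """x: (steps, in_channels); kernel: (width, in_channels, filters); bias: (filters,).
    'valid' padding only uses windows that fit entirely inside the sequence."""
    width = kernel.shape[0]
    steps = (x.shape[0] - width) // stride + 1
    out = np.empty((steps, kernel.shape[2]))
    for t in range(steps):
        window = x[t * stride:t * stride + width]                   # (width, in_channels)
        # Sum over window positions and input channels for every filter
        out[t] = np.tensordot(window, kernel, axes=([0, 1], [0, 1])) + bias
    return np.maximum(out, 0.0)                                     # ReLU


def global_max_pool(features):
    """Keep the maximum of each filter over all time steps: (steps, filters) -> (filters,)."""
    return features.max(axis=0)


rng = np.random.default_rng(0)
x = rng.normal(size=(21, 128))                    # one padded tweet after a 128-dim embedding
kernel = rng.normal(scale=0.05, size=(3, 128, 256))
bias = np.zeros(256)

features = conv1d_valid_relu(x, kernel, bias)
pooled = global_max_pool(features)
print(features.shape, pooled.shape)               # (19, 256) (256,)
```

The pooled 256-value vector has the same size regardless of where in the tweet a filter fired, which is what lets the small Dense(32) layer follow directly.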

Fourth submission

```python
# Same GRU model as the first submission, with a lower initial learning rate
adam3 = optimizers.Adam(lr=0.03, decay=0.1)

model_4 = Sequential()
model_4.add(Embedding(max_words, 100))
model_4.add(GRU(32))
model_4.add(Dropout(0.5))
model_4.add(Dense(2, activation='sigmoid'))

# Compile with adam3 so the lr=0.03 setting defined above is actually used
model_4.compile(loss='binary_crossentropy', optimizer=adam3, metrics=['acc'])

history = model_4.fit(X_train_vec, y_train, batch_size=20, epochs=1, validation_split=0.1)

predict = model_4.predict(X_test_vec)
predict_labels = np.argmax(predict, axis=1)

ids = list(test['id'])
submission_dic = {"id": ids, "target": predict_labels}
submission_df = pd.DataFrame(submission_dic)
submission_df.to_csv("kaggle_day22_4.csv", index=False)
```

Result
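The fourth submission defines `adam3` with a lower initial rate (0.03 instead of 0.05), both with `decay=0.1`. In Keras 2.x this `decay` argument is a time-based schedule applied per optimizer update (per batch), roughly `lr / (1 + decay * iterations)`, so with `decay=0.1` the rate is already halved after 10 batches. The sketch below, using hypothetical helper names, compares the two settings and counts how many updates a single epoch performs.

```python
import math

# Hypothetical helpers for illustration; assumes Keras 2.x time-based `decay`.


def legacy_decay_lr(initial_lr, decay, iteration):
    # Learning rate after `iteration` optimizer updates (batches)
    return initial_lr / (1.0 + decay * iteration)


def steps_per_epoch(num_samples, batch_size):
    # Number of batches, i.e. optimizer updates, in one epoch
    return math.ceil(num_samples / batch_size)


for name, lr in (("adam2", 0.05), ("adam3", 0.03)):
    rates = [legacy_decay_lr(lr, 0.1, k) for k in (0, 10, 100)]
    print(name, [round(r, 5) for r in rates])
```

Since one epoch already runs `steps_per_epoch(num_samples, batch_size)` updates, most of the single training epoch happens at a rate far below the nominal 0.05 or 0.03.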

Fifth submission

```python
# The first-submission model again, with Dropout lowered from 0.5 to 0.1
adam2 = optimizers.Adam(lr=0.05, decay=0.1)

model_3 = Sequential()
model_3.add(Embedding(max_words, 100))
model_3.add(GRU(32))
model_3.add(Dropout(0.1))
model_3.add(Dense(2, activation='sigmoid'))

model_3.compile(loss='binary_crossentropy', optimizer=adam2, metrics=['acc'])

history = model_3.fit(X_train_vec, y_train, batch_size=16, epochs=1, validation_split=0.1)

predict = model_3.predict(X_test_vec)
predict_labels = np.argmax(predict, axis=1)

ids = list(test['id'])
submission_dic = {"id": ids, "target": predict_labels}
submission_df = pd.DataFrame(submission_dic)
submission_df.to_csv("kaggle_day22_5.csv", index=False)
```

Result
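The fifth submission only changes the Dropout rate after the GRU from 0.5 to 0.1. During training, Keras' Dropout zeroes each unit with probability `rate` and scales the surviving units by 1 / (1 - rate) so the expected activation is unchanged; at prediction time it passes values through untouched. Here is a NumPy sketch of that training-time behavior; the function name and the all-ones input are hypothetical.

```python
import numpy as np

# Hypothetical helper for illustration; not Keras' implementation.


def inverted_dropout(x, rate, rng):
    # Training mode: drop each unit with probability `rate` and scale the
    # survivors by 1 / (1 - rate) so the expected activation stays the same
    keep = rng.random(x.shape) >= rate
    return np.where(keep, x / (1.0 - rate), 0.0)


rng = np.random.default_rng(42)
x = np.ones(100_000)
for rate in (0.5, 0.1):
    y = inverted_dropout(x, rate, rng)
    print(f"rate={rate}: dropped {np.mean(y == 0):.3f}, mean activation {y.mean():.3f}")
```

With rate 0.1 far fewer of the 32 GRU outputs are zeroed on each batch, so the regularizing noise is much weaker than with 0.5.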
